The Silent Killer

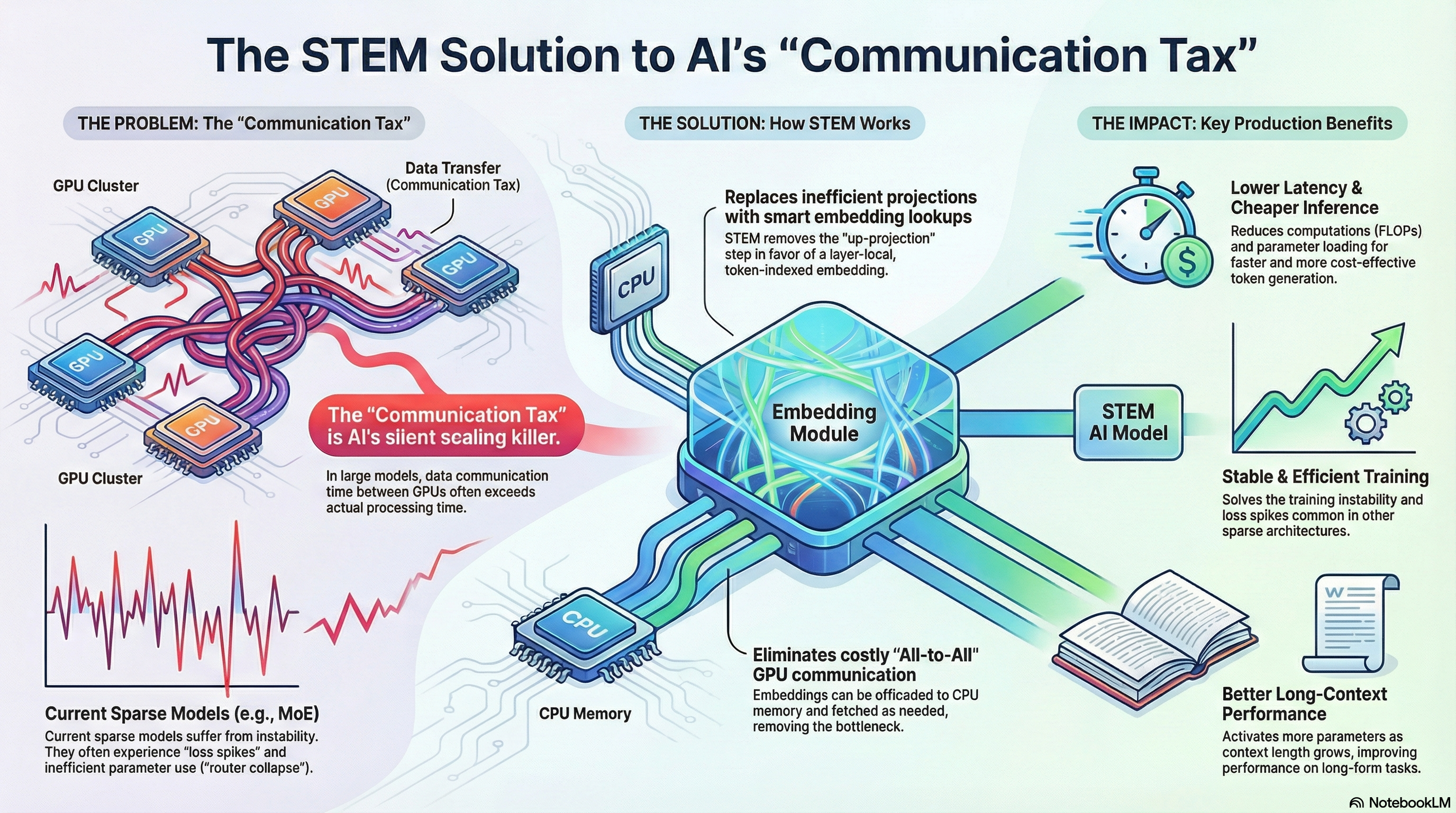

When you split a large language model across 100 GPUs, there's a cost that rarely makes it into the benchmarks: the communication tax. Every time one GPU needs data from another (to dispatch a token, synchronize gradients, or share activations), whatever depends on that data waits unless the transfer can be overlapped with other work. And it waits at the speed of the interconnect, not the speed of compute.

This might be the silent killer of large-scale AI. Not the floating-point operations. Not the memory bandwidth. The sheer cost of GPUs talking to each other.
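To make the communication tax concrete, here is a back-of-envelope sketch that compares compute time with transfer time for a single step on one GPU. The function name `step_times` and every number below are hypothetical, chosen only for illustration; real systems also overlap communication with compute, which this simple model ignores.

```python
def step_times(flops, bytes_exchanged, flops_per_sec, link_bytes_per_sec, latency_sec=0.0):
    """Return (compute_seconds, communication_seconds) for one step on one GPU."""
    compute = flops / flops_per_sec
    comm = latency_sec + bytes_exchanged / link_bytes_per_sec
    return compute, comm


# Hypothetical numbers, not measurements from any real cluster.
compute, comm = step_times(
    flops=2e12,               # arithmetic done by one GPU in the step
    bytes_exchanged=4e9,      # data sent and received in the step
    flops_per_sec=4e14,       # assumed sustained compute throughput
    link_bytes_per_sec=5e10,  # assumed sustained interconnect bandwidth
)
print(f"compute: {compute * 1e3:.1f} ms, communication: {comm * 1e3:.1f} ms")
```

Under these assumed figures the transfer takes far longer than the arithmetic. The point is the shape of the comparison, not the specific values.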

Why MoE Seems to Make It Worse

Mixture-of-Experts architectures were designed to ease the scaling problem by activating only a few expert sub-networks per token. But they appear to have introduced a routing paradox: a small gating network scores every expert for each token, and the token then has to be sent to whichever experts win. The scoring itself is cheap and local. The expensive part is moving tokens to experts that live on other GPUs and moving the results back.

When experts live on different GPUs (and with hundreds of experts, they generally must), most routing decisions turn into network transfers: tokens are dispatched to remote experts and their outputs are gathered back, typically through all-to-all exchanges. Multiply that by billions of tokens, and the communication overhead can potentially dwarf the actual computation.
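A small simulation can show how often a routed token has to leave its own GPU. This is a sketch under stated assumptions: random scores stand in for a learned gate, experts are split evenly across GPUs, and tokens are sharded round-robin. The names `route_top_k` and `count_dispatches` are hypothetical.

```python
import random


def route_top_k(scores, k):
    """Return the indices of the k highest-scoring experts for one token."""
    return sorted(range(len(scores)), key=lambda e: scores[e], reverse=True)[:k]


def count_dispatches(num_tokens, num_experts, num_gpus, k, seed=0):
    """Count (token, expert) assignments that stay local vs. cross GPUs."""
    rng = random.Random(seed)
    experts_per_gpu = num_experts // num_gpus
    local = remote = 0
    for token in range(num_tokens):
        home_gpu = token % num_gpus  # assumption: round-robin token sharding
        # Assumption: uniform random scores stand in for a learned gate.
        scores = [rng.random() for _ in range(num_experts)]
        for expert in route_top_k(scores, k):
            if expert // experts_per_gpu == home_gpu:
                local += 1
            else:
                remote += 1
    return local, remote


local, remote = count_dispatches(num_tokens=10_000, num_experts=64, num_gpus=8, k=2)
print(f"local: {local}, remote: {remote}, remote share: {remote / (local + remote):.1%}")
```

With uniform scores and 8 GPUs, roughly 7 in 8 assignments land on a remote GPU. A trained gate will not be uniform, but without locality-aware placement the remote share stays high as the GPU count grows.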

Training instability tends to follow: some experts get overloaded while others sit idle, so training leans on load-balancing heuristics (auxiliary losses, capacity limits) that are fragile and often struggle at scale.
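As one illustration of such a heuristic, the sketch below computes an auxiliary load-balancing loss of the form N · Σ fᵢ · Pᵢ for top-1 routing, where fᵢ is the fraction of tokens sent to expert i and Pᵢ is the mean gate probability for expert i. This form appears in some MoE work, such as the Switch Transformer. Using it here is an assumption for illustration, not something this article prescribes, and the function names are hypothetical.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def load_balance_loss(router_logits):
    """Auxiliary loss N * sum_i(f_i * P_i) for top-1 routing.

    f_i: fraction of tokens whose top-scoring expert is i.
    P_i: mean gate probability assigned to expert i.
    """
    num_tokens = len(router_logits)
    num_experts = len(router_logits[0])
    probs = [softmax(row) for row in router_logits]
    counts = [0] * num_experts
    for p in probs:
        counts[p.index(max(p))] += 1
    f = [c / num_tokens for c in counts]
    mean_p = [sum(p[i] for p in probs) / num_tokens for i in range(num_experts)]
    return num_experts * sum(fi * pi for fi, pi in zip(f, mean_p))


# Toy logits: four tokens, four experts.
balanced = [[2.0, 0.0, 0.0, 0.0], [0.0, 2.0, 0.0, 0.0],
            [0.0, 0.0, 2.0, 0.0], [0.0, 0.0, 0.0, 2.0]]
skewed = [[2.0, 0.0, 0.0, 0.0]] * 4  # every token prefers expert 0

loss_balanced = load_balance_loss(balanced)
loss_skewed = load_balance_loss(skewed)
print(f"balanced: {loss_balanced:.3f}, skewed: {loss_skewed:.3f}")
```

The loss is lowest when tokens spread evenly and rises when they pile onto one expert. That is the pressure the heuristic applies, and it has to be tuned against the main training objective.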

The MoE approach tried to make models more efficient by introducing specialization. But specialization across a distributed system creates coordination costs that seem to grow with the number of specialists.

What If We Stopped Thinking About Experts?

Here's an idea worth exploring: what if we stopped thinking about "experts" and started thinking about granularity?

An expert is a coarse-grained unit — an entire sub-network that either fires or does not. Fine-grained sparsity operates at the individual neuron level. Instead of routing tokens to large blocks of parameters, you activate only the specific neurons that matter for each computation.

The difference could be profound:

Coarse-grained routing (MoE) requires a per-token assignment decision ("which expert handles this?") whose answer determines where data has to travel, and that movement creates communication bottlenecks.

Fine-grained sparsity makes activation decisions locally, within each layer, based on learned patterns. No routing. No cross-GPU coordination for token assignment. Each GPU handles its own sparsity decisions independently.
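The contrast can be sketched with a toy layer that decides, per neuron, what stays active. Top-k selection by magnitude is a stand-in for the "learned patterns" mentioned above and is an assumption of this example, as are the names `dense_layer` and `top_k_sparsify`. The sketch computes every pre-activation before masking, so it shows that the decision is local, not how compute would actually be saved.

```python
def dense_layer(x, weights):
    """Compute pre-activations: one dot product per neuron (one row of weights)."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in weights]


def top_k_sparsify(activations, k):
    """Keep the k largest-magnitude activations and zero the rest."""
    order = sorted(range(len(activations)), key=lambda i: abs(activations[i]), reverse=True)
    keep = set(order[:k])
    return [a if i in keep else 0.0 for i, a in enumerate(activations)]


# Toy input and weights: 3 inputs, 4 neurons, all values hypothetical.
x = [1.0, -2.0, 0.5]
weights = [
    [0.2, 0.1, 0.0],
    [1.0, -1.0, 2.0],
    [0.0, 0.5, 0.5],
    [-0.3, 0.0, 0.4],
]

hidden = dense_layer(x, weights)
sparse_hidden = top_k_sparsify(hidden, k=2)
print(sparse_hidden)
```

Nothing in `top_k_sparsify` needs to know what another device is doing: the selection uses only the activations already held by this layer's shard.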

The Organizational Analogy

Think of it this way: MoE is like an organization that routes every customer request to a specialized department. The routing department becomes the bottleneck.

Fine-grained sparsity is more like giving every employee the judgment to handle what they can and escalate only what they cannot. The coordination cost drops dramatically.

This might not be just a technical optimization. It could represent a fundamentally different philosophy about how intelligence should be distributed — one that seems to mirror what effective organizations have learned about the cost of coordination.

Next in this series: Is Density Dead? — how STEM architecture makes fine-grained sparsity practical, and why 70B parameters might outperform 175B.