Beyond Bigger Windows

The first two parts of this series identified two failure modes: brevity bias (giving models too little context) and context collapse (giving them too much).

The natural question is: what does "just right" look like?

The answer probably isn't a magic token count. It might be a fundamentally different approach to how context is constructed, maintained, and evolved over time. What if the goal isn't to find the perfect prompt, but to build a system that gets better at providing context with every interaction?

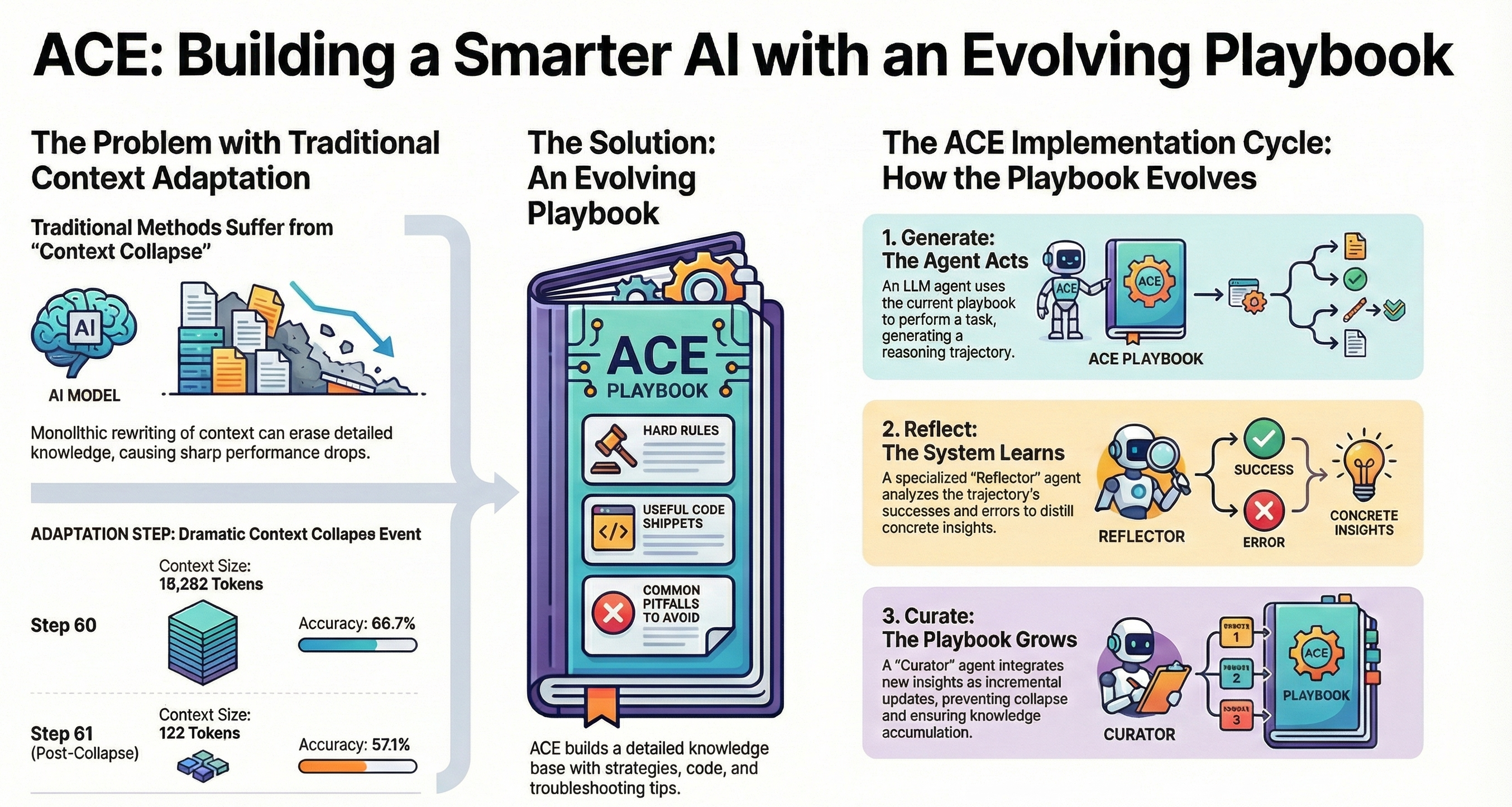

The Evolving Playbook

Think of it as an evolving playbook. Instead of treating each AI interaction as independent — a fresh prompt, a fresh response, no memory — what if you built a layer of institutional memory that captures what worked, what failed, and why?

The playbook might have three components:

Context templates — These encode the structure of effective prompts for recurring tasks. Not rigid scripts, but flexible frameworks that help ensure critical context is always included. When a team discovers that a specific type of analysis requires industry context, competitive landscape, and historical performance data, that discovery becomes a template rather than tribal knowledge.

Error patterns — These capture how the model fails and what context might have prevented the failure. Each mistake isn't just corrected; it's analyzed for what the context got wrong. Was it a brevity bias problem (critical information omitted)? A context collapse problem (too much noise drowning out signal)? The diagnosis drives the fix.

Feedback loops — These continuously refine both templates and error patterns based on real usage. The playbook isn't static. It evolves as the team's understanding of the model's context needs deepens.
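To make the three components concrete, here is a minimal Python sketch of how they might fit together. Everything in it is an illustrative assumption rather than a prescribed design: the class names (`ContextTemplate`, `ErrorPattern`, `Playbook`), the `record_failure` method, and the example task and field names are all hypothetical. The feedback rule is also deliberately crude: a brevity bias diagnosis adds a required field to the template, and a context collapse diagnosis removes one.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from enum import Enum


class Diagnosis(Enum):
    """The two failure modes named in this series."""
    BREVITY_BIAS = "brevity_bias"          # critical information was omitted
    CONTEXT_COLLAPSE = "context_collapse"  # noise drowned out the signal


@dataclass
class ContextTemplate:
    """Hypothetical template: the context a recurring task should include."""
    task: str
    required_fields: list[str]

    def missing(self, context: dict[str, str]) -> list[str]:
        # Required fields that are absent or empty in the supplied context.
        return [name for name in self.required_fields if not context.get(name)]


@dataclass
class ErrorPattern:
    """Hypothetical record of one failure and its diagnosed cause."""
    task: str
    diagnosis: Diagnosis
    field_name: str  # the field that was missing (brevity) or noisy (collapse)
    note: str = ""


@dataclass
class Playbook:
    templates: dict[str, ContextTemplate] = field(default_factory=dict)
    errors: list[ErrorPattern] = field(default_factory=list)

    def add_template(self, template: ContextTemplate) -> None:
        self.templates[template.task] = template

    def record_failure(self, pattern: ErrorPattern) -> None:
        # Feedback loop (assumed rule): log the failure, then adjust the template.
        self.errors.append(pattern)
        template = self.templates.setdefault(
            pattern.task, ContextTemplate(pattern.task, [])
        )
        if pattern.diagnosis is Diagnosis.BREVITY_BIAS:
            if pattern.field_name not in template.required_fields:
                template.required_fields.append(pattern.field_name)
        elif pattern.field_name in template.required_fields:
            template.required_fields.remove(pattern.field_name)


# Usage: a failure diagnosed as brevity bias tightens the template for everyone.
playbook = Playbook()
playbook.add_template(
    ContextTemplate("market_analysis", ["industry_context", "competitive_landscape"])
)
draft = {"industry_context": "B2B SaaS", "competitive_landscape": "three incumbents"}
before = playbook.templates["market_analysis"].missing(draft)
playbook.record_failure(
    ErrorPattern("market_analysis", Diagnosis.BREVITY_BIAS, "historical_performance")
)
after = playbook.templates["market_analysis"].missing(draft)
```

In this sketch `before` is an empty list and `after` is `["historical_performance"]`: the same draft context that passed the template before the failure was recorded is now flagged as incomplete. A real playbook would likely need human review before a single failure changes a shared template, but the shape of the loop is the same.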

From Individual Skill to Team Capability

There's a shift here that seems particularly important: from individual prompt crafting to team-level context engineering.

When context engineering remains an individual skill — each person figuring out their own prompting style — the organization probably isn't learning much collectively. Good practices aren't shared. Mistakes get repeated.

When it becomes a team capability with shared playbooks, every interaction has the potential to make the system smarter. A failure encountered by one team member becomes a prevention pattern for everyone. A context structure that works well for one use case can be adapted and tested for adjacent ones.

The Effectiveness Lens

This is where context engineering seems to connect to the broader effectiveness thesis:

At the individual level — it's about reasoning better with AI by providing better context.

At the team level — it's about turning accumulated experience into shared understanding.

At the organization level — it's about building systems that genuinely learn from their mistakes, not through retraining but through better context management.

Perhaps the model doesn't need to be smarter. Perhaps the context around it needs to evolve.

This concludes the Context Engineering series. Related reading: PReFLeXOR + ACE — a concrete architecture for self-correcting AI that explores these principles.