The Scaling Wall

For years, the equation driving AI progress seemed unbreakable: more intelligence requires more parameters requires more compute. The scaling laws told us that if we just made models bigger, they would get smarter. And for a while, that was true.

But something has changed.

The returns are diminishing. Training costs are measured in hundreds of millions of dollars. Inference latency makes real-time applications impractical. Energy consumption has become a genuine environmental concern. And perhaps most tellingly, the performance gains from doubling model size are shrinking with each generation.

Size, it turns out, is not just expensive. Sometimes it is a liability.

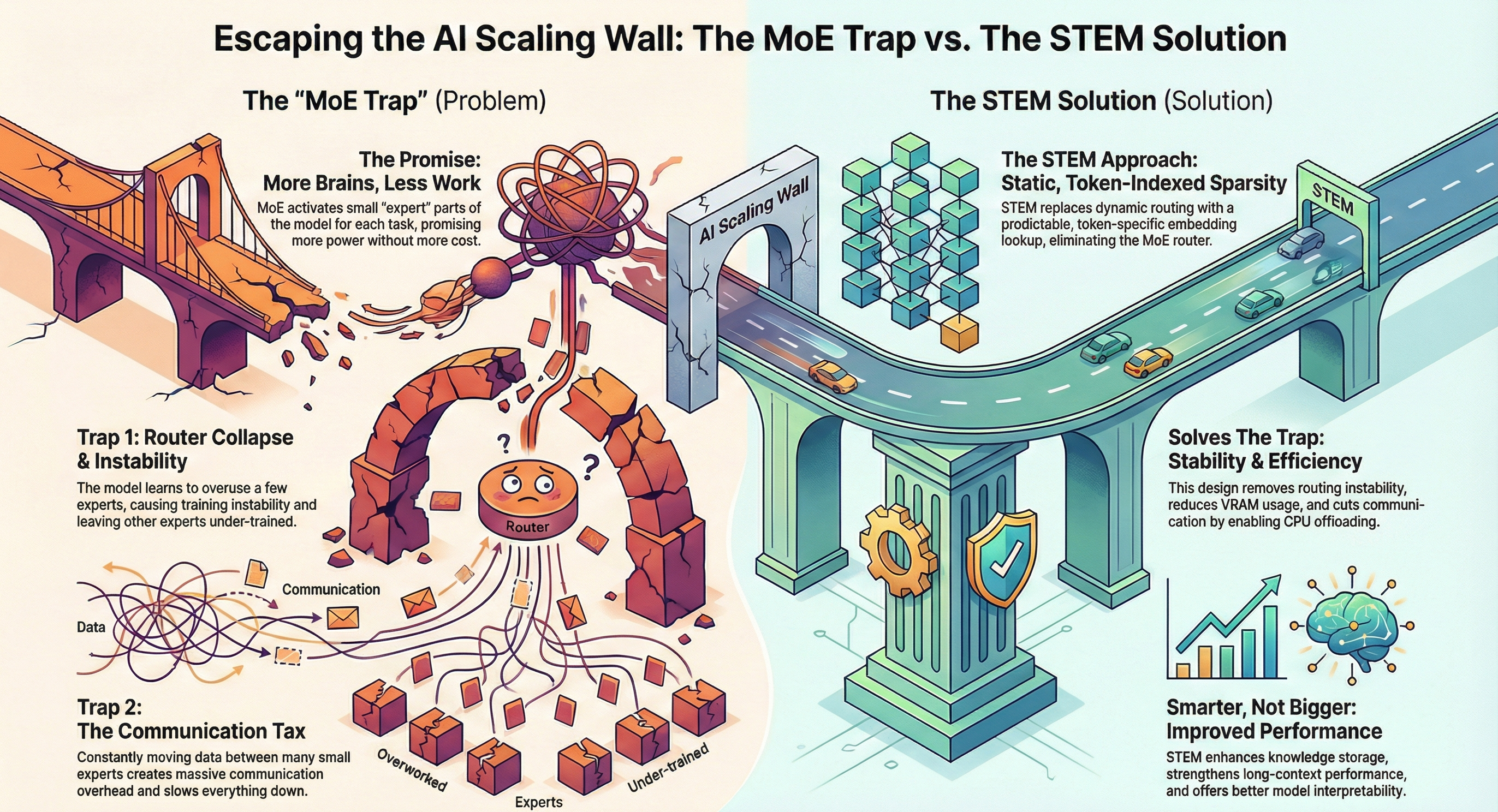

The Mixture-of-Experts Trap

The industry's first answer to the scaling wall was Mixture-of-Experts (MoE). The idea was elegant: instead of activating every parameter for every token, route each input to a small subset of specialized "expert" sub-networks. You get a model with hundreds of billions of parameters, but only a fraction fires at any given time.

In theory, this should have been the best of both worlds — the knowledge capacity of a massive model with the inference cost of a smaller one. In practice, MoE introduced its own set of problems.

The routing mechanism itself becomes a bottleneck. Load balancing across experts is fragile. And when you distribute these expert networks across multiple GPUs — which you must, given their size — you run headlong into a problem that may be even more fundamental than parameter count: the communication tax.

Why "Bigger" Broke

The deeper issue is architectural. Dense models activate every parameter for every token, regardless of whether that computation is useful. It is the equivalent of consulting every department in an organization for every decision, no matter how routine. The overhead eventually overwhelms the value.

What the scaling laws actually revealed, if we read them carefully, is not that intelligence requires size — it is that intelligence requires the right computation applied at the right time. The brute-force approach of "activate everything, everywhere, always" was never going to scale indefinitely.

A Different Question

Perhaps the question was never "how do we make models bigger?"

Perhaps it should have been "how do we make models smarter about which computations matter?"

That reframing — from scaling parameters to scaling intelligence — is what led researchers toward a fundamentally different architecture. One where sparsity is not a compromise but a feature. Where smaller models can match or outperform giants by being selective about what they compute.

The answer is not to drain the ocean. It is to learn which drops of water matter.

Next in this series: The Communication Tax — why splitting models across GPUs creates a hidden performance killer.