The Paradox of Memory

Common sense says a good memory means holding on to everything. But neuroscience tells a different story.

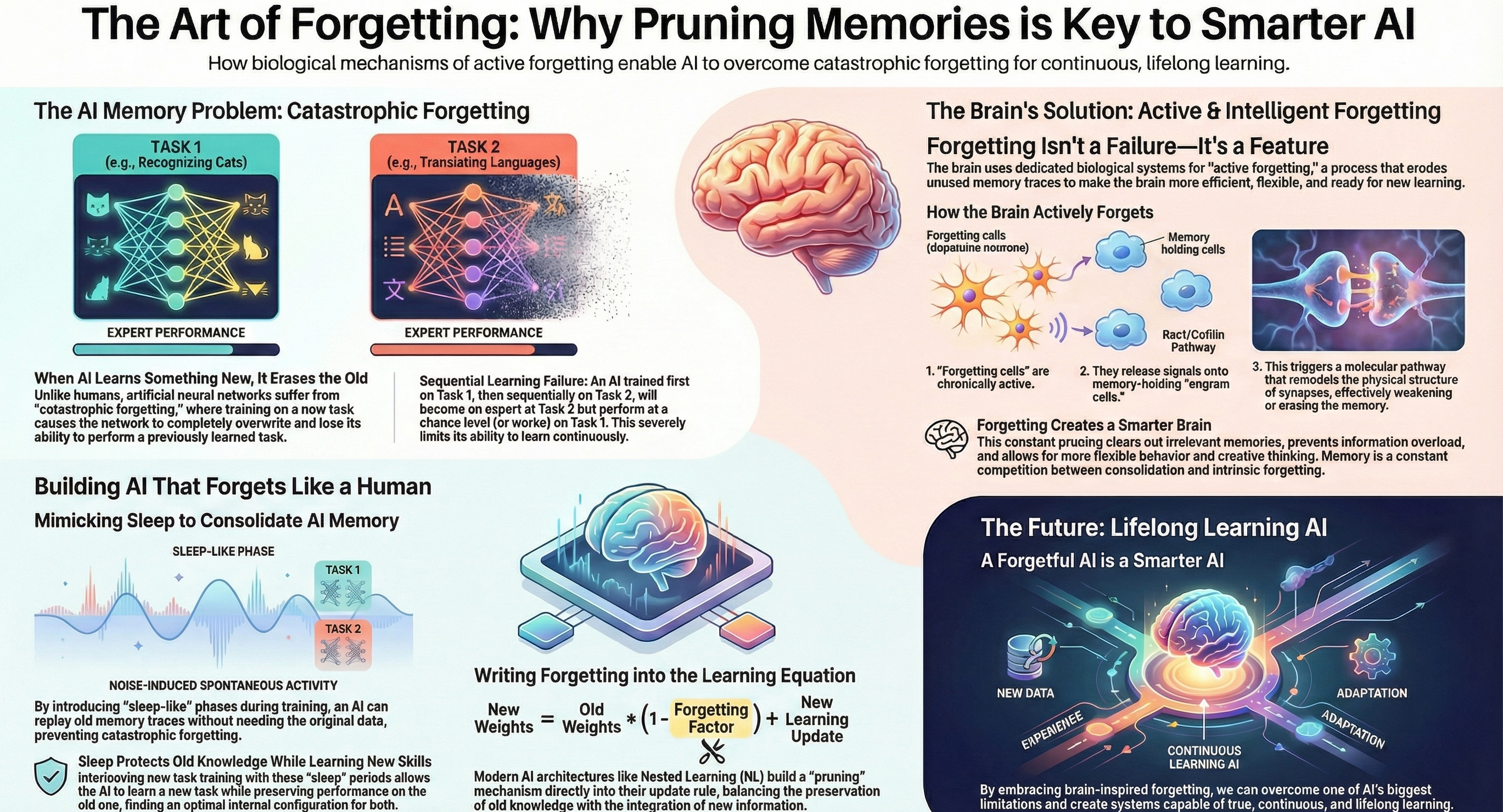

The brain is not a hard drive. It is an optimization engine — and forgetting is one of its most powerful tools. Three independent lines of research converge on the same insight: forgetting is not a bug in human cognition. It is a feature.

Forgetting Optimizes Decision-Making

A 2017 review in the journal Neuron by Paul Frankland and Blake Richards at the University of Toronto challenged a deeply held assumption about memory. The primary objective of memory, they argued, is not to record every detail but to optimize intelligent decision-making by filtering out useless data.

The review highlighted two biological mechanisms that actively prune memories:

Synaptic weakening: connections between neurons gradually weaken or disappear, reducing access to older memories.

Neurogenesis: new neurons generated from stem cells integrate into the hippocampus, remodeling existing circuits and overwriting older memories. This may help explain why young children, who produce new neurons at a higher rate, also forget more.

Two benefits emerge from this pruning:

Flexibility: discarding outdated information prevents conflicting memories from hindering good decisions.

Generalization: retaining essential patterns rather than specific details allows knowledge to transfer to new situations.

This mirrors a well-known principle in machine learning: regularization. Models that memorize training data (overfitting) perform worse on new data than models that learn to generalize by discarding noise.
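
To make the regularization analogy concrete, here is a minimal sketch of L2 weight decay on a toy linear model. Everything in it is an assumption made for illustration: the `fit_linear` and `squared_norm` helpers, the synthetic data, and the hyperparameters. It is not taken from the research described above. It shows only that the penalty shrinks the learned weights, which is the "discarding" half of the story.

```python
import random


def fit_linear(xs, ys, weight_decay=0.0, lr=0.05, steps=2000):
    """Fit y ~ w.x by gradient descent on mean squared error.

    weight_decay adds an L2 penalty (weight_decay * ||w||^2) to the loss,
    which pulls every weight toward zero on each step.
    """
    n_features = len(xs[0])
    w = [0.0] * n_features
    for _ in range(steps):
        grad = [0.0] * n_features
        for x, y in zip(xs, ys):
            err = sum(wi * xi for wi, xi in zip(w, x)) - y
            for j in range(n_features):
                grad[j] += 2 * err * x[j] / len(xs)
        # Data gradient plus the gradient of the L2 penalty.
        w = [wi - lr * (g + 2 * weight_decay * wi) for wi, g in zip(w, grad)]
    return w


def squared_norm(w):
    return sum(wi * wi for wi in w)


random.seed(0)
# Toy data (hypothetical): only the first feature matters, the other four are noise.
xs = [[random.uniform(-1, 1) for _ in range(5)] for _ in range(12)]
ys = [3.0 * x[0] + random.gauss(0, 0.5) for x in xs]

plain = fit_linear(xs, ys)
decayed = fit_linear(xs, ys, weight_decay=0.1)
print("no decay:  ", squared_norm(plain))
print("with decay:", squared_norm(decayed))
```

The decayed model ends up with a smaller weight norm than the plain one. Whether that improves accuracy on new data depends on the dataset and on how strong the penalty is, so the sketch makes no claim about test error.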

Forgetting Is a Form of Learning

In 2023, neuroscientists at Trinity College Dublin tested the theory that forgetting is not merely decay — it is an active, adaptive process.

Dr. Tomas Ryan and Dr. Livia Autore studied what happens when competing experiences cause recently formed memories to become inaccessible, a process called retroactive interference. Their experiments revealed something remarkable: memories labeled as "forgotten" were not erased. They became inaccessible.

Using optogenetics (light stimulation of specific neurons), the researchers successfully retrieved these apparently lost memories. More importantly, new related experiences could naturally reactivate forgotten memory traces and update them with new information.

The implications are significant. Forgetting functions as a form of learning that allows flexible behavior in a changing environment. The brain does not lose information carelessly — it strategically reduces access to information that conflicts with current needs, while keeping the underlying traces available for future reactivation.
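
For readers who think in data structures, the distinction between erasing a memory and losing access to it can be sketched as a store where interference lowers an accessibility score instead of deleting the entry. This is a loose software analogy, not a model of the Trinity experiments: the `Trace` and `Memory` classes, the threshold, and the interference amount are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Trace:
    content: str
    accessibility: float = 1.0  # 1.0 = easily recalled, 0.0 = fully suppressed


class Memory:
    RECALL_THRESHOLD = 0.5  # hypothetical cutoff for unaided recall

    def __init__(self):
        self.traces = {}

    def store(self, key, content):
        self.traces[key] = Trace(content)

    def interfere(self, key, amount=0.6):
        """A competing experience lowers access; the trace itself is kept."""
        trace = self.traces[key]
        trace.accessibility = max(0.0, trace.accessibility - amount)

    def recall(self, key):
        """Return the content only if the trace is accessible enough."""
        trace = self.traces.get(key)
        if trace is None or trace.accessibility < self.RECALL_THRESHOLD:
            return None
        return trace.content

    def reactivate(self, key, update=None):
        """A related experience restores access and may update the content."""
        trace = self.traces[key]
        trace.accessibility = 1.0
        if update is not None:
            trace.content = update


memory = Memory()
memory.store("route", "take the bridge")
memory.interfere("route")
forgotten = memory.recall("route")   # below threshold, so nothing comes back
still_stored = "route" in memory.traces
memory.reactivate("route", update="take the tunnel")
recovered = memory.recall("route")
```

After interference, `recall` returns nothing even though the entry is still in `traces`. Reactivation brings it back with the updated content, which is the pattern the paragraph above describes.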

The Biology of Active Forgetting

A 2017 perspective paper by Ronald Davis (Scripps Research Institute) and Yi Zhong (Tsinghua University) went even deeper, arguing that forgetting is an active biological process — not passive decay.

The brain possesses dedicated molecular pathways to erase memories, comparable to how cells have separate systems for building and demolishing structures. The best-characterized mechanism involves dopamine neurons releasing signals that activate the Rac1/cofilin pathway, which remodels the structural connections between neurons. This weakens synapses and degrades memory traces.

Davis and Zhong introduced the concept of intrinsic forgetting — chronic signaling systems that slowly degrade molecular memory traces as a default state. Memories survive only when actively consolidated, not when passively stored.

The brain's baseline operation is to forget; remembering is the exception that requires effort.
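
The "forget by default, remember with effort" idea can be sketched as a store in which every trace loses strength on each time step unless something actively reinforces it. This is a toy illustration, not the molecular mechanism Davis and Zhong describe. The `step` function and its `decay`, `boost`, and `floor` parameters are assumptions chosen for the example.

```python
def step(strengths, consolidated, decay=0.1, boost=0.3, floor=0.05):
    """Advance one time step and return the traces that survive.

    Every trace decays by default. Only keys listed in `consolidated`
    are reinforced. Traces that fall below `floor` are dropped.
    """
    survivors = {}
    for key, strength in strengths.items():
        strength *= 1.0 - decay                      # default decay
        if key in consolidated:
            strength = min(1.0, strength + boost)    # active consolidation
        if strength >= floor:
            survivors[key] = strength
    return survivors


# Two traces start equally strong; only one is ever consolidated.
traces = {"colleague's name": 1.0, "yesterday's parking spot": 1.0}
for _ in range(40):
    traces = step(traces, consolidated={"colleague's name"})
```

After 40 steps only the consolidated trace remains. The other was never deliberately deleted. It faded because nothing maintained it.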

The Bridge to AI

What neuroscience calls selective recall, AI engineers call context pruning. The parallel runs deep:

| Human Brain | AI System |
|---|---|
| Synaptic pruning | Weight decay / parameter pruning |
| Sleep consolidation | Memory consolidation phases |
| Selective recall | Attention mechanisms / context windowing |
| Generalization through forgetting | Regularization / dropout |
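
As one illustration of the "selective recall" row, here is a minimal sketch of context pruning: keep the most relevant messages that fit a fixed budget and drop the rest. The `prune_context` and `relevance` functions are hypothetical, the word count stands in for a real tokenizer, and the keyword-overlap score stands in for whatever relevance signal a production system would use.

```python
def relevance(message, query):
    """Hypothetical relevance score: number of words shared with the query."""
    return len(set(message.lower().split()) & set(query.lower().split()))


def prune_context(messages, budget, score):
    """Keep the highest-scoring messages that fit the budget, in original order."""
    ranked = sorted(range(len(messages)),
                    key=lambda i: score(messages[i]), reverse=True)
    kept, used = set(), 0
    for i in ranked:
        cost = len(messages[i].split())  # crude word count as a token proxy
        if used + cost <= budget:
            kept.add(i)
            used += cost
    return [messages[i] for i in sorted(kept)]


history = [
    "user prefers metric units",
    "weather small talk about rain today",
    "deadline is Friday",
    "another long digression about lunch options nearby",
]
query = "what units and deadline"
pruned = prune_context(history, budget=8, score=lambda m: relevance(m, query))
```

With this history and an 8-word budget, the two messages that share a word with the query are kept and the small talk is dropped. The greedy selection is a design shortcut. It does not guarantee the best possible use of the budget.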

The next article in this series explores how AI researchers are building these same principles into systems that learn continuously without catastrophic failure.

This article draws from research by Frankland & Richards (University of Toronto), Ryan & Autore (Trinity College Dublin), and Davis & Zhong (Scripps Research / Tsinghua University).