The Free Lunch

What if you could make a model simultaneously faster, cheaper, and more capable — not by adding parameters but by subtracting activations?

That is the promise of fine-grained sparsity, and recent research suggests it might be closer to a free lunch than anything else in deep learning.

The key insight: in a standard dense model, the vast majority of neuron activations for any given input are near zero. They contribute almost nothing to the output but consume full compute. What if you simply did not compute them?

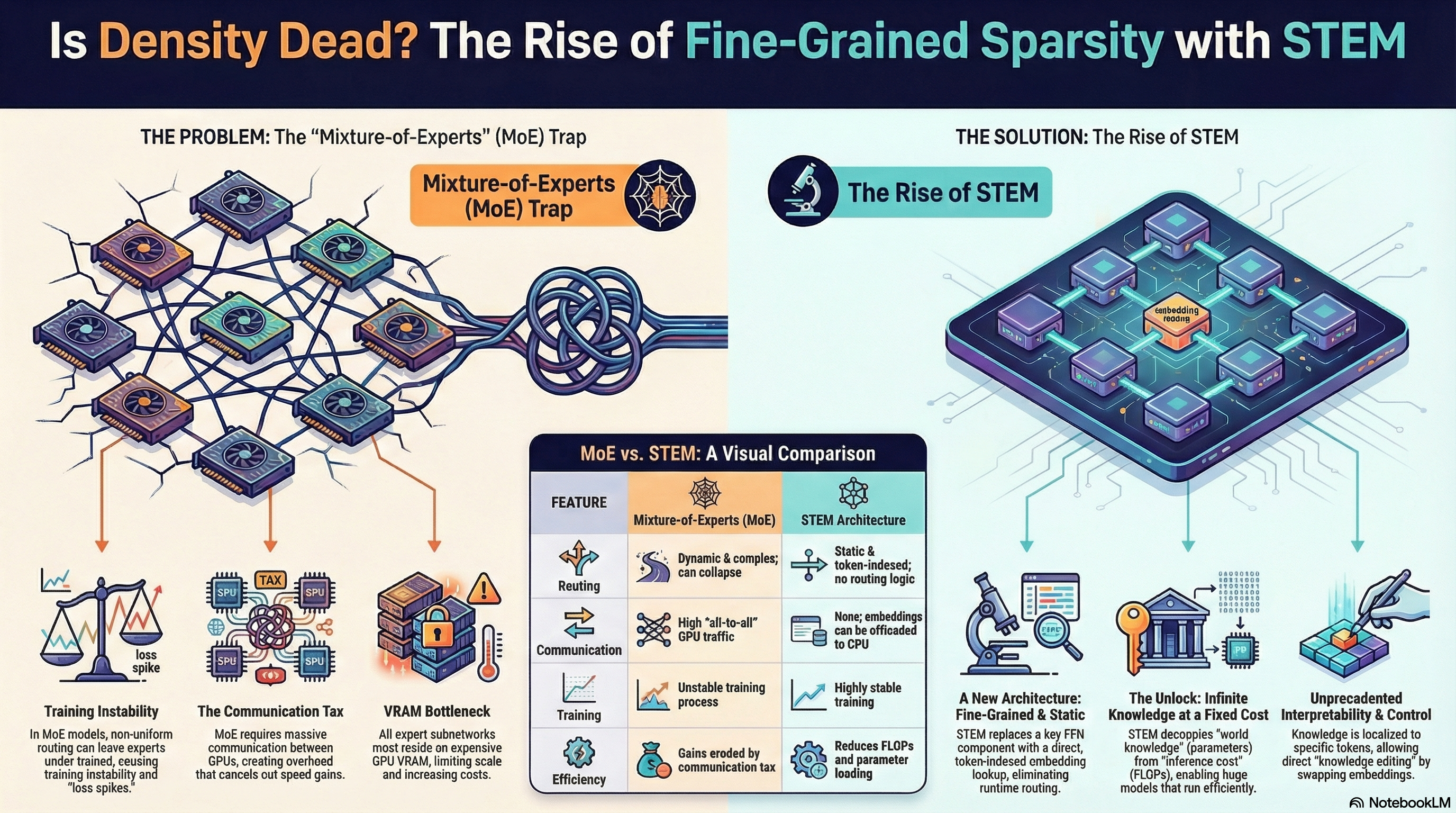

Enter STEM

STEM (Sparse Transformer with Efficient Memory) represents a new class of architectures that build sparsity into the model from the ground up rather than bolting it on after training. The approach works through three mechanisms.

Resampling — Periodically refreshing which neurons are active based on input patterns, ensuring the sparse structure adapts rather than becoming rigid.

Expensive Potentials — Using learned threshold functions that determine, at each layer, which neurons carry enough signal to justify computation.

Grammar Constraints — Structural rules that ensure the sparse activation patterns remain coherent across layers, preventing the kind of incoherent outputs that naive pruning can produce.

Performance is boosted by cumulatively adding these layers of control, each one refining which computations actually matter.

Smaller Models Outperform Giants

The results challenge everything we thought we knew about scaling.

Models with 70 billion parameters using STEM-style fine-grained sparsity are matching or outperforming dense models with 175 billion parameters — over 8x larger — across diverse benchmarks.

This is not a narrow result on a single task. The improvements hold across Python code generation, Text-to-SQL translation, and even molecular synthesis. The breadth matters: it suggests that fine-grained sparsity is not exploiting a quirk of specific benchmarks but capturing something fundamental about how intelligence can be computed more efficiently.

What This Means

The implications ripple outward across every level of the AI ecosystem:

For individuals — Smaller and faster models mean AI reasoning tools that run locally, on personal hardware, without cloud dependencies. The democratization of AI capability is a sparsity story.

For organizations — The economics shift dramatically. If a 70B model matches a 175B model, the infrastructure cost drops by an order of magnitude. Real-time inference becomes practical for applications where latency previously ruled it out.

For the ecosystem — The energy and compute implications are enormous. If the same intelligence can be achieved with a fraction of the activations, the environmental cost of AI drops proportionally.

Density may not be dead. But it is no longer the default assumption. The question has shifted from "how big can we build?" to "how sparse can we go?"

This concludes The Sparsity Revolution series. Related reading: The Art of Forgetting — how the brain's own pruning mechanisms parallel what AI is learning about selective computation.