Amnesia, Not Hallucinations

When an AI agent produces confidently wrong output, the instinct is to call it a hallucination — the model "made something up." But there may be a more precise and more useful diagnosis: the model forgot.

Not forgot in the human sense of a memory fading over time. Forgot in the computational sense of context being compressed, truncated, or overwhelmed to the point where critical information is no longer accessible to the reasoning process.

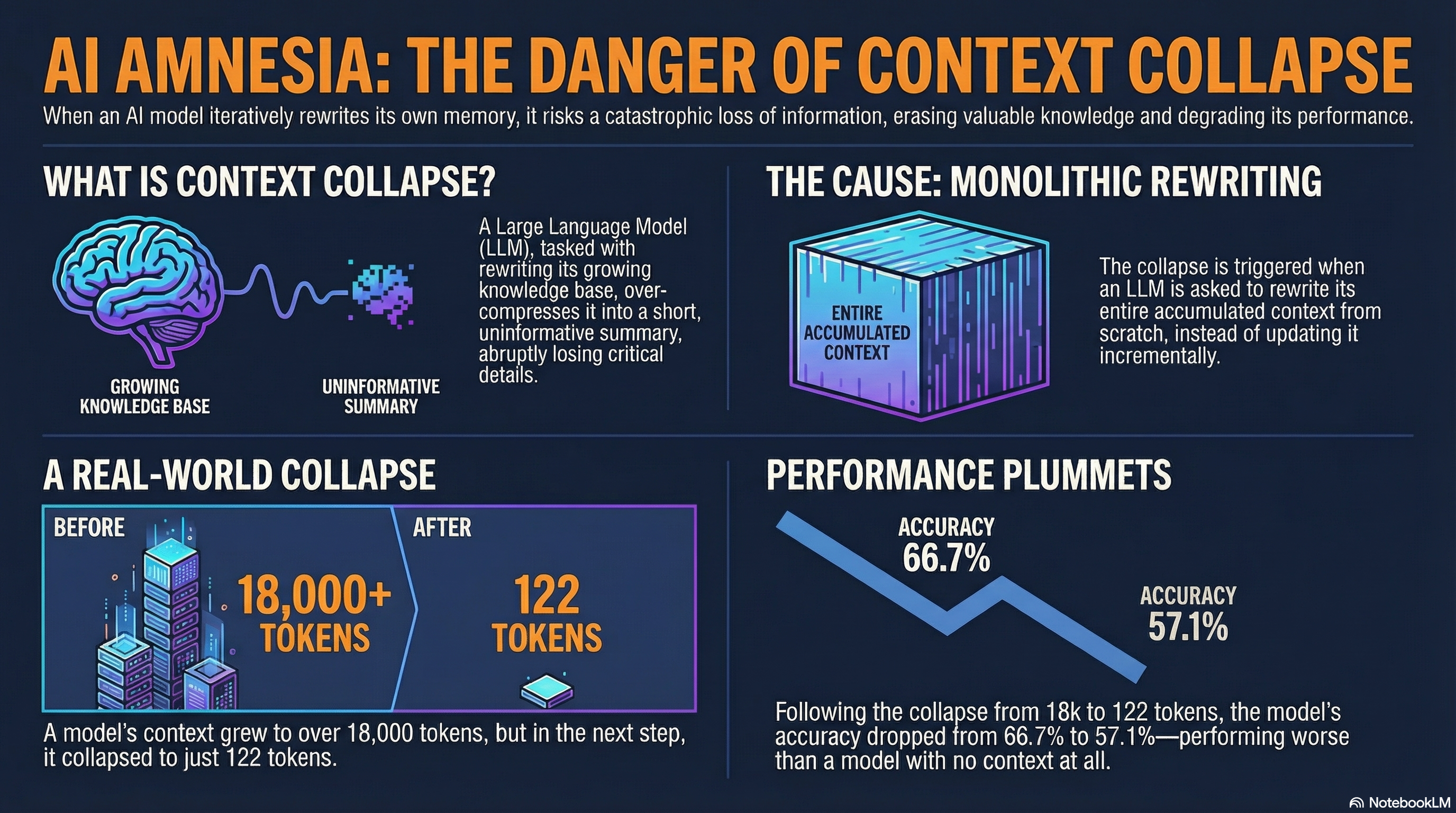

The model probably isn't inventing fiction. It seems to be working with an incomplete picture of reality and doing the best it can. This is context collapse, and understanding it might change how you build with AI.

The Critical Moment

Context collapse doesn't appear to be gradual. Research suggests it behaves more like a phase transition — performance holds steady as context grows, and then at a critical threshold, it drops sharply.

Imagine an LLM tasked with rewriting a growing document. As the document expands, the model handles it well — accuracy stays high, coherence is maintained, the output reflects the full context.

Then, at a specific token count (often far below the model's advertised context window), something seems to break. The model suddenly compresses its growing context into a short, uninformative summary. In one study, accuracy dropped from 66.7% to 57.1% — worse than using no context at all.

That last point is worth pausing on: after context collapse, the model may perform worse than if you had given it nothing. The collapsed context isn't just incomplete — it could be actively misleading, because the model treats its compressed summary as authoritative.
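One way to guard against this failure mode is to treat a sudden shrink as a warning sign before accepting a rewritten context. The sketch below is only an illustration: `approx_tokens`, `accept_rewrite`, and the `min_ratio` threshold are hypothetical names and values, and the word-count estimate stands in for a real tokenizer.

```python
def approx_tokens(text: str) -> int:
    """Crude token estimate: whitespace-separated words.

    Assumption for illustration only; a real tokenizer would give
    different counts.
    """
    return len(text.split())


def accept_rewrite(previous: str, rewritten: str, min_ratio: float = 0.5) -> str:
    """Return the rewritten context unless it shrank suspiciously.

    min_ratio is a hypothetical tuning knob: a rewrite that keeps fewer
    than this fraction of the previous tokens is treated as a possible
    collapse, and the previous context is kept instead.
    """
    before = approx_tokens(previous)
    after = approx_tokens(rewritten)
    if before > 0 and after < before * min_ratio:
        return previous  # reject the rewrite, keep the fuller context
    return rewritten
```

A check like this won't explain why a collapse happened, but it can stop a one-line summary from silently replacing thousands of tokens of accumulated context.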

Why Advertised Context Windows Can Mislead

This might be why a model that advertises a 128K or even 1M token context window can't necessarily make effective use of that much context. The window is a capacity limit, not a quality guarantee.

Effective context, meaning the amount of information the model can actually reason over without degradation, often seems to be a fraction of the advertised window.

The practical implication: if you're feeding an AI agent long documents, conversation histories, or accumulated research, there may be a point at which adding more context makes the output worse, not better. Finding that threshold for your specific use case could be one of the more valuable context engineering skills to develop.
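If you already measure task accuracy at several context sizes, locating that threshold can be as simple as looking for the first sharp drop. Here is a minimal sketch, assuming you have collected `(context_size, accuracy)` pairs from your own evaluation. The function name `find_collapse_threshold` and the `max_drop` tolerance are hypothetical, and the numbers in the usage example are made up for illustration.

```python
from typing import Optional, Sequence, Tuple


def find_collapse_threshold(
    results: Sequence[Tuple[int, float]],
    max_drop: float = 0.05,
) -> Optional[int]:
    """Find the first context size where accuracy falls sharply.

    results: (context_size_in_tokens, accuracy) pairs from your own runs.
    max_drop: hypothetical tolerance; a drop larger than this below the
        best accuracy seen at any smaller size counts as a collapse.
    Returns the offending context size, or None if no such drop occurs.
    """
    best = None
    for size, accuracy in sorted(results):
        if best is not None and best - accuracy > max_drop:
            return size
        best = accuracy if best is None else max(best, accuracy)
    return None


# Illustrative, made-up measurements (not from any study).
measurements = [(1000, 0.80), (2000, 0.82), (4000, 0.81), (8000, 0.60)]
threshold = find_collapse_threshold(measurements)
```

With the made-up data above, the function flags the 8,000-token run, which suggests keeping working context below that size for this particular task.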

The Organizational Parallel

Context collapse may not be just a technical phenomenon. Organizations seem to experience something similar.

When a team is flooded with information — Slack channels, email threads, meeting notes, dashboards — there's probably a point at which more information reduces rather than improves decision quality. The team can't process it all, so it compresses to heuristics and summaries that lose critical nuance.

The same principle may apply in both cases. The solution probably isn't unlimited memory but selective attention: knowing what to retain, what to compress, and what to discard.
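For an AI agent, the retain/compress/discard idea can be sketched as a budgeted selection over context items. Everything here is an assumption made for illustration: the `Item` type, the integer `priority` score, the word-based budget, and especially the "compression" step, which is plain truncation rather than real summarization.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Item:
    text: str
    priority: int  # higher means more important (hypothetical scoring)


def build_context(items: List[Item], budget: int, summary_words: int = 5) -> List[str]:
    """Retain, compress, or discard items under a word budget."""
    kept: List[str] = []
    remaining = budget
    # Consider the most important items first.
    for item in sorted(items, key=lambda i: i.priority, reverse=True):
        words = item.text.split()
        if len(words) <= remaining:
            kept.append(item.text)  # retain in full
            remaining -= len(words)
        elif remaining >= summary_words:
            # Compress: naive truncation standing in for a real summary.
            kept.append(" ".join(words[:summary_words]) + " ...")
            remaining -= summary_words
        # Otherwise discard: there is no room even for a short version.
    return kept
```

The design choice worth noting is that the decision happens per item and in priority order, so low-value material is dropped deliberately instead of being squeezed out by whatever arrived most recently.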

Next in this series: Building AI That Learns From Its Mistakes — practical approaches to context engineering that might prevent collapse and preserve learning.